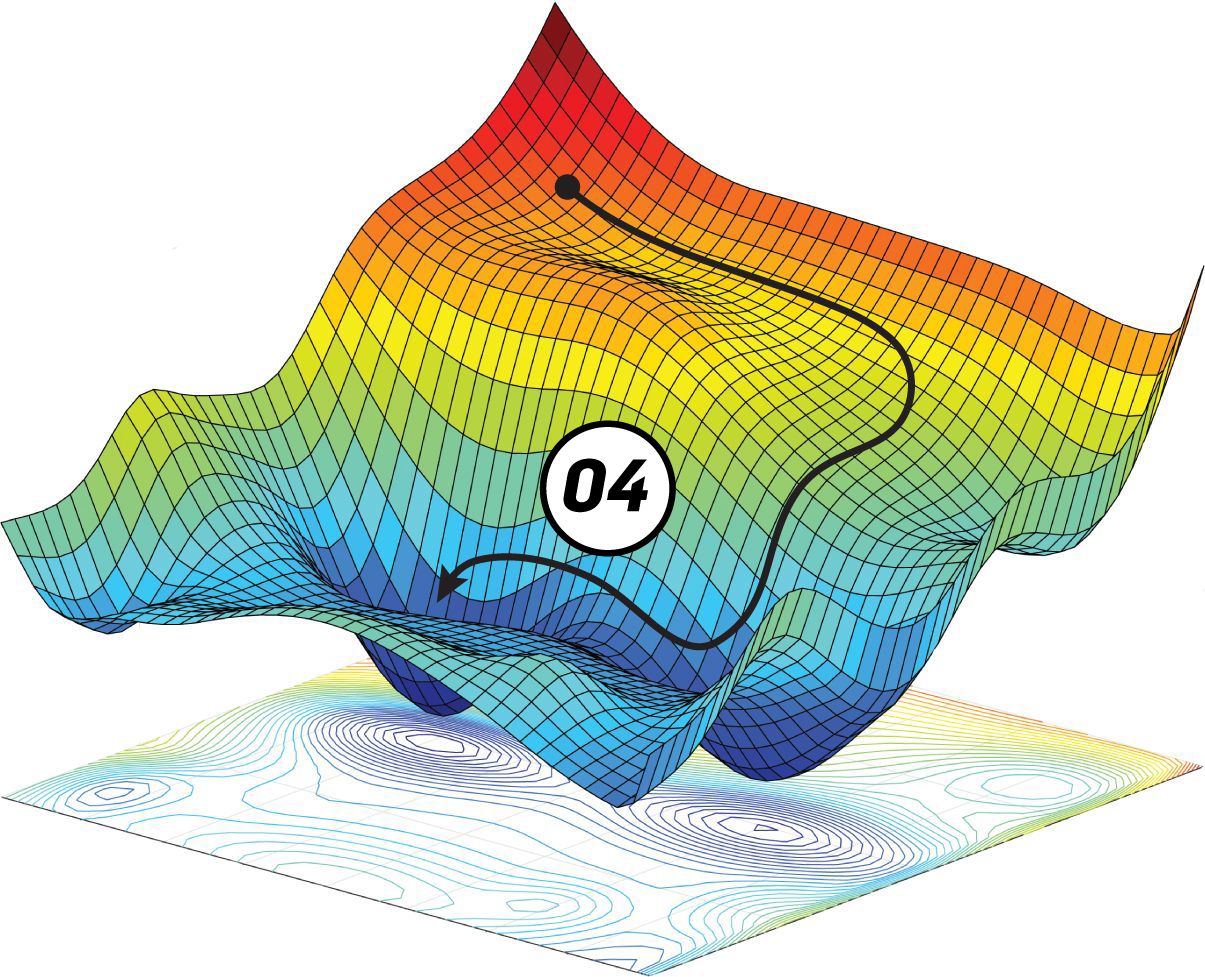

The objective of the optimization algorithm is to minimize the cost (J) in order to produce better, more accurate predictions. To do so, the internal parameters, weights (w) and biases (b), need to be updated based on certain update rules or functions of the optimization algorithm. Gradient Descent (GD) is one such first-order iterative optimization algorithm. It attempts to find a local minimum of a differentiable function, using the first derivative when updating the parameters. The task would be simple if the objective function were truly convex, but that is not the case in the real world. In this article we will clarify why we need Gradient Descent, how it works, what its shortcomings are and what could be done about them.

An overview of how the learning takes place in Neural Networks

A neural network is a network of interconnected neurons comprising three ingredients: weights (w), biases (b), and activation functions. The learning process involves iterative forward and backward propagations. We propagate the input features forward through the network's hidden layers, applying the learning parameters (which account for linear transformations) and the activation functions (which account for non-linear transformations), until we reach the final output layer. The predictions may differ from the actual values, so we calculate the loss J using a cost function, which tells us how erroneous the predictions are. This loss is propagated backwards through every layer, and the learning parameters of each neuron in every layer are updated along the way.

When training a deep neural network with gradient descent and backpropagation, we calculate the partial derivatives by moving through the network from the final output layer to the initial layer. With the chain rule, layers closer to the input go through repeated matrix multiplications to compute their derivatives. In a network of n hidden layers, n derivatives will be multiplied together. If the derivatives are large, the gradient will increase exponentially as we propagate back through the model until it eventually explodes; this is what we call the exploding gradient problem. Alternatively, if the derivatives are small, the gradient will decrease exponentially as we propagate through the model until it eventually vanishes; this is the vanishing gradient problem. If the range of the initial weight values is not carefully chosen, and the range of the weight values during training is not controlled, vanishing gradients can occur, and they are the main hurdle to learning in deep networks.

Neural networks are trained using the gradient descent algorithm:

$$w \leftarrow w - \alpha \frac{\partial J}{\partial w}$$

where J is the loss of the network on the current training batch and α is the learning rate (the biases b are updated in the same way). If ∂J/∂w is very small, the learning will be very slow, since the changes in w will be very small. So, if the gradients vanish, the learning will be quite slow.

The reason for vanishing gradients is that, during backpropagation, the gradients of the initial layers are obtained by multiplying the gradients of the later layers. So if, say, the gradients of the later layers are less than one, their product vanishes very fast. Here, "gradient" means the gradient of the loss function J with respect to each trainable parameter (weights w and biases b). A vanishing gradient does not mean that the gradient vector is all zero (except through numerical underflow); it means the gradients are so small that learning will be very slow.

Exploding gradients occur when the derivatives increase as we go backward through every layer during backpropagation. This is the exact opposite of vanishing gradients. The architecture becomes unstable due to large changes in the loss at each update step, and the weights grow exponentially and become very large instead of settling down.

A saddle point on the surface of the loss function is a critical point that, from one perspective, looks like a local minimum, while from another perspective it looks like a local maximum. Saddle points inject confusion into the learning process: learning stops (or becomes extremely slow) at such a point, "thinking" that a minimum has been reached because the slope is zero (or very close to zero). From the other perspective, though, this critical point is actually a maximum of the cost. Saddle points only come into view when gradient descent runs in more than one dimension.

Changing the network's architecture is one solution that could be used for both the exploding and vanishing gradient problems, but it requires a good understanding of the consequences of the change. For example, if we reduce the number of layers in our network, we give up some of our model's complexity, since having more layers makes the network more capable of representing complex mappings.

Gradient Clipping for Exploding Gradients

Carefully monitoring and limiting the size of the gradients whilst our model trains is yet another solution.
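The gradient descent update w ← w − α·∂J/∂w can be tried out on a toy problem. The following is a minimal sketch in plain Python, assuming a hypothetical one-parameter loss J(w) = (w − 3)², a learning rate of 0.1 and a helper named `gradient_descent`; none of these come from the article. The second run multiplies every gradient by 10⁻⁴ to mimic a vanishing gradient: with the same number of steps, w barely moves.

```python
def gradient_descent(grad, w0, lr=0.1, steps=100):
    """Apply the update w <- w - lr * dJ/dw for a fixed number of steps."""
    w = w0
    for _ in range(steps):
        w = w - lr * grad(w)
    return w


def dloss_dw(w):
    """Derivative of the hypothetical toy loss J(w) = (w - 3)**2."""
    return 2.0 * (w - 3.0)


# Healthy gradient: w converges to the minimum at w = 3.
w_final = gradient_descent(dloss_dw, w0=0.0)
print(f"{w_final:.4f}")  # prints 3.0000

# "Vanishing" gradient: same loss, but every gradient is 10,000 times smaller.
w_slow = gradient_descent(lambda w: 1e-4 * dloss_dw(w), w0=0.0)
print(f"{w_slow:.4f}")  # w has barely moved away from its starting value of 0
```

Because each step changes w by α times the gradient, shrinking the gradient shrinks every step by the same factor, which is exactly why vanishing gradients slow learning down.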
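The claim that n derivatives are multiplied together in a network of n hidden layers can be illustrated with a deliberately simplified model in which each layer is a single scalar, so the gradient reaching the first layer is just the product of the per-layer derivatives. The sketch below assumes a sigmoid activation (whose derivative is at most 0.25) for the vanishing case and a hypothetical constant per-layer derivative of 1.5 for the exploding case; both choices are illustrative assumptions, not taken from the article.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def sigmoid_derivative(z):
    s = sigmoid(z)
    return s * (1.0 - s)  # largest value is 0.25, reached at z = 0


def gradient_at_first_layer(local_derivatives):
    """Chain rule for a chain of scalar layers: multiply every layer's derivative."""
    return math.prod(local_derivatives)


n_layers = 20

# Even at its steepest point, the sigmoid contributes a factor of only 0.25 per layer.
vanishing = gradient_at_first_layer([sigmoid_derivative(0.0)] * n_layers)

# Hypothetical layers whose local derivative is 1.5 (e.g. because of large weights).
exploding = gradient_at_first_layer([1.5] * n_layers)

print(f"{vanishing:.3e}")  # prints 9.095e-13
print(f"{exploding:.3e}")  # prints 3.325e+03
```

Real layers multiply Jacobian matrices rather than scalars, but the same effect appears: factors consistently below one drive the product toward zero, and factors above one make it grow exponentially with depth.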
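The saddle-point behaviour can be reproduced with the textbook function f(x, y) = x² − y², which is used here as an assumed stand-in for a loss surface. At (0, 0) the gradient is zero; along x the point is a minimum and along y it is a maximum. This sketch runs plain gradient descent from two nearby starting points to show how learning stalls at the saddle and only escapes if there is some component along the "maximum" direction.

```python
def f(x, y):
    """Toy loss with a saddle point at (0, 0): a minimum along x, a maximum along y."""
    return x ** 2 - y ** 2


def grad_f(x, y):
    return 2.0 * x, -2.0 * y


def run_gd(x, y, lr=0.1, steps=100):
    """Plain gradient descent on f starting from (x, y)."""
    for _ in range(steps):
        gx, gy = grad_f(x, y)
        x, y = x - lr * gx, y - lr * gy
    return x, y


# Starting exactly on the x-axis, y never changes, so GD slides into the saddle point
# and the gradient shrinks to (almost) zero, as if a minimum had been found.
x1, y1 = run_gd(1.0, 0.0)
print("stalled at", (x1, y1), "gradient", grad_f(x1, y1))

# A tiny nudge along y grows by a factor of 1.2 per step, so GD eventually escapes
# downhill along the y direction, where (0, 0) is actually a maximum.
x2, y2 = run_gd(1.0, 1e-6)
print("escaped to", (x2, y2), "loss", f(x2, y2))
```

The second run also shows why saddle points slow learning rather than always stopping it: the escape starts from a tiny offset and takes many nearly flat steps before the loss drops noticeably.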
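Gradient clipping can be applied per component (by value) or to the whole gradient vector (by norm). The following is a minimal sketch of norm-based clipping in plain Python; the function name `clip_by_norm` and the threshold of 5.0 are hypothetical choices for illustration. Frameworks provide their own versions, for example PyTorch's `torch.nn.utils.clip_grad_norm_`, which rescales a model's parameter gradients in place.

```python
import math


def clip_by_norm(grads, max_norm):
    """Rescale gradient components so their combined L2 norm is at most max_norm."""
    total_norm = math.sqrt(sum(g * g for g in grads))
    if total_norm <= max_norm:
        return list(grads)  # small enough: leave the gradient untouched
    scale = max_norm / total_norm
    return [g * scale for g in grads]  # same direction, smaller magnitude


exploded = [300.0, -400.0]  # L2 norm is 500
print(clip_by_norm(exploded, max_norm=5.0))  # approximately [3.0, -4.0]

healthy = [0.3, -0.4]  # L2 norm is 0.5, below the threshold
print(clip_by_norm(healthy, max_norm=5.0))  # returned unchanged
```

Rescaling the whole vector, rather than capping each component separately, keeps the direction of the update intact and only limits its length, so an exploding gradient can no longer produce one huge, destabilizing step.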
